I first saw process mining software in 2008, when Fujitsu was showing off their process discovery software/services package. That same year, I saw an interesting presentation by Anne Rozinat at the academic BPM conference, where she tied together concepts of process mining and simulation without really using the terms process mining or discovery. Rozinat went on to form Fluxicon, which developed one of the earliest process mining products and really opened up the market, and she spent time with me providing my early process mining education. Fast forward 10+ years, and process mining is finally a hot topic: I’m seeing it from mining-only companies (such as Celonis), as part of a suite from process modeling companies (such as Signavio), and even within larger process automation suites (such as Software AG). Eindhoven University of Technology, arguably the birthplace of process mining, even offers a free process mining course that is quite comprehensive, covering usage as well as many of the underlying algorithms. I did the course and found it offered some great insights and a few challenges.

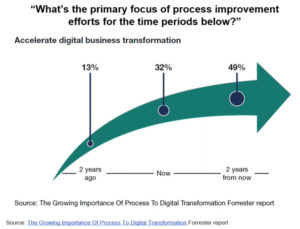

Today, Celonis hosted a webinar, featuring Rob Koplowitz of Forrester in conversation with Celonis’ CMO Anthony Deighton, on the role of process improvement in improving digital operations. Koplowitz started with some results from a Forrester survey showing that digital transformation is now the primary driver of process improvement initiatives, and highlighting the importance of process mining in that transformation. Process mining continues its traditional role in process discovery and conformance checking, but also has a role in process enhancement and guidance. Lucky for those of us who focus on process, process is now cool (again).

Unlike just examining analytics for the part of a process that is automated in a BPMS, process mining allows for capturing information from any system and tracing the entire customer journey, across multiple systems and forms of interaction. Process discovery using a process mining tool (like Celonis) lets you take all of that data and create consolidated process models, highlighting the problem areas such as wait states and rework. It’s also a great way to find compliance problems, since you’re looking at how the processes actually work rather than how they were designed to work.
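
For anyone who hasn't seen what this looks like under the covers, here is a minimal sketch of the idea in Python. It is not how Celonis or any other product implements discovery; it just builds a simple directly-follows graph from an event log. The log itself, the activity names, and the `discover` function are all made up for illustration, and I'm assuming the events from the different systems have already been merged into (case ID, activity, timestamp) rows.

```python
from collections import Counter, defaultdict
from datetime import datetime

# Hypothetical event log, already merged from several systems.
# Each row is (case id, activity, ISO timestamp); all values are invented.
EVENT_LOG = [
    ("c1", "Receive order", "2020-01-06T09:00"),
    ("c1", "Check credit", "2020-01-06T09:30"),
    ("c1", "Ship order", "2020-01-07T09:30"),
    ("c2", "Receive order", "2020-01-06T10:00"),
    ("c2", "Check credit", "2020-01-06T10:15"),
    ("c2", "Check credit", "2020-01-06T16:15"),
    ("c2", "Ship order", "2020-01-08T16:15"),
]


def discover(event_log):
    """Build a directly-follows graph with average waits and rework flags."""
    # Group events into one trace per case.
    traces = defaultdict(list)
    for case_id, activity, timestamp in event_log:
        traces[case_id].append((datetime.fromisoformat(timestamp), activity))

    edge_counts = Counter()          # how often activity A is followed by B
    wait_hours = defaultdict(list)   # elapsed hours observed on each A -> B edge
    rework_cases = set()             # cases where some activity repeats

    for case_id, events in traces.items():
        events.sort()  # order each trace by timestamp
        activities = [activity for _, activity in events]
        if len(set(activities)) < len(activities):
            rework_cases.add(case_id)
        # Walk consecutive pairs of events within the case.
        for (t1, a1), (t2, a2) in zip(events, events[1:]):
            edge_counts[(a1, a2)] += 1
            wait_hours[(a1, a2)].append((t2 - t1).total_seconds() / 3600)

    avg_wait = {edge: sum(h) / len(h) for edge, h in wait_hours.items()}
    return edge_counts, avg_wait, rework_cases


if __name__ == "__main__":
    edges, waits, rework = discover(EVENT_LOG)
    for edge, count in edges.items():
        print(edge, count, round(waits[edge], 2))
    print("Cases with rework:", rework)
```

With this toy log, case `c2` is flagged for rework because the credit check happens twice, and the step from "Check credit" to "Ship order" stands out with an average wait of 36 hours. Real tools use far more sophisticated discovery algorithms and handle noise, concurrency, and much larger logs, but the basic input (case, activity, timestamp) is the same.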

Koplowitz had some interesting insights and advice in his presentation, not the least of which was to engage business experts to drive change and automation, not just technologists, and use process analytics (including process mining) as a guide to where problems lie and what should/could be automated. He showed how process mining fits into the bigger scope of process improvement, contributing to the discovery and analysis stages that are a necessary precursor to reengineering and automation.

Good discussion on the webinar, and there will probably be a replay available if you head to the landing page.